Are You and Your Family Ready to Safely Deal with Voice Cloning Scams?

Artificial intelligence isn’t just enhancing the user experience on your iPhone or allowing you to converse in real time with chatbots like ChatGPT. Early last year, the FBI warned that generative artificial intelligence is also being used by criminals to commit large-scale fraud.

In Public Service Announcement I-120324, the FBI detailed some of the ways criminals are capitalizing on artificial intelligence to assist their activities. Criminals can use AI-generated text to create fake social media profiles, translate messages into other languages, and craft specifically tailored messages. AI-generated images can be used to dupe others via fake social media profiles and to create fake identification documents like driver’s licenses. More recently, AI-generated audio, also known as voice cloning, is being used to trick family members into sending money and unwittingly giving up access to their bank accounts.

This is scary stuff. You may wonder how it’s possible to protect yourself and your loved ones from such a sophisticated attack. While nothing is 100 percent foolproof, there are some very basic precautions you and your family can take together to minimize the impact of a potential future AI fraud attempt.

The first step is to become informed and aware of the threat. You may receive a call asking for money from someone who sounds just like a family member. If you and your family agree on a secret word or phrase for these situations, you have a quick and reliable way to check the caller’s identity. So, take the time to discuss the existence of voice cloning scams and come up with your secret code. You can also simply hang up. While the idea of hanging up on family in dire straits seems a little rude, it’s for everyone’s protection. Call the person back using the number you’d usually use to reach them. If they answer and know nothing about the earlier call, or if you can’t reach them at all, treat the original call as a likely scam attempt. If the caller doesn’t know the secret word, that’s another reason to be suspicious and not send any money.

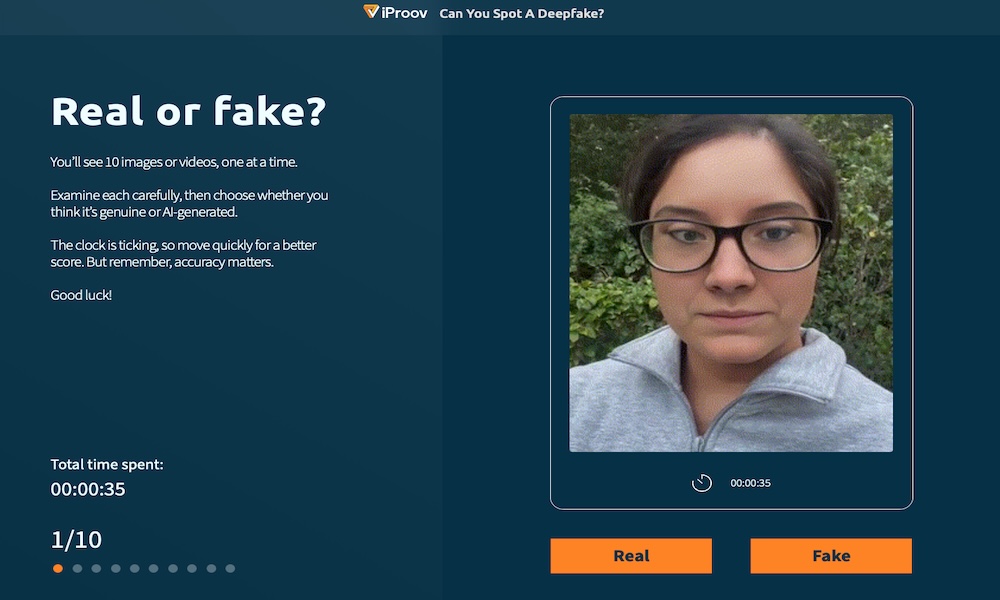

Remember, you must be on your toes. These calls will be convincing. As with AI-generated images and videos, even trained professionals are sometimes duped. When on the phone with a loved one, listen closely to their words and tone to see if anything is off. Regarding images and videos that may be fake, keep an eye out for anything that looks unrealistic, especially hands and feet, teeth, and eyes. You can measure your deepfake spotting skills by taking this quick test. We’re guessing you didn’t do as well as you thought you would.

There’s no doubt AI has a scary side when it comes to scammers and fraud. AI potentially puts our personal information, privacy, and financial security at risk. It’s difficult even for experts to distinguish real from fake images and voices, let alone the average person going about their day. We can only rely on technology companies like Apple to do so much. The rest is up to us. Safety begins with being informed and sharing this knowledge with your family and friends. Something as simple as a family “secret code” or password may help prevent significant loss.